Neural search engines are redefining how organizations deliver search experiences by understanding meaning instead of just matching keywords. Powered by deep learning and vector embeddings, these systems interpret user intent, context, and relationships between words to return more accurate and relevant results. Businesses adopting neural search report improvements in engagement, conversions, and operational efficiency. As data volumes grow and user expectations rise, neural search is quickly becoming the standard for intelligent discovery systems.

TL;DR: Neural search engines use artificial intelligence and vector embeddings to understand the meaning behind queries, not just the exact words typed. This allows businesses to provide more accurate, context-aware, and personalized search results. From e-commerce to enterprise knowledge bases, neural search enhances relevance and user satisfaction. Companies that implement it gain a competitive advantage through smarter, faster information retrieval.

Traditional keyword-based search systems rely on direct word matching. If a user searches for “affordable running shoes,” a conventional engine scans for pages containing those exact terms. However, it may miss relevant results like “budget-friendly sneakers for jogging.” Neural search engines, by contrast, understand that “affordable” and “budget-friendly” are closely related concepts and that “running shoes” and “sneakers for jogging” share semantic meaning.

This shift is made possible through natural language processing (NLP), machine learning, and vector search. These technologies allow systems to interpret context, user behavior, synonyms, and even subtle nuances in phrasing.

How Neural Search Engines Work

At the heart of every neural search system is the concept of embeddings. Embeddings convert words, sentences, or entire documents into numerical vectors. These vectors capture semantic meaning, allowing similar concepts to be grouped together in multi-dimensional space.
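To make "grouped together in multi-dimensional space" concrete, the sketch below compares three hand-made vectors using cosine similarity, one common similarity measure. The three-dimensional vectors and their values are invented for illustration; in practice an embedding model produces them, typically with hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, chosen by hand so related phrases point the same way.
affordable = [0.9, 0.1, 0.0]
budget_friendly = [0.8, 0.2, 0.1]
marathon = [0.0, 0.2, 0.9]

# Related concepts score close to 1; unrelated ones score close to 0.
print(cosine_similarity(affordable, budget_friendly))
print(cosine_similarity(affordable, marathon))
```

The first score comes out far higher than the second, which is the property a search engine exploits when it ranks results by vector similarity.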

The process typically involves:

- Text Processing: Content and queries are analyzed and tokenized.

- Embedding Creation: AI models transform text into vectors.

- Vector Indexing: Vectors are stored in a specialized database for fast retrieval.

- Similarity Matching: Queries are matched with the closest vectors using a distance or similarity measure, such as cosine similarity.

When a user submits a query, the engine does not simply look for exact keyword matches. Instead, it embeds the query with the same model used for the content and retrieves the nearest vectors, which surfaces conceptually similar content. This can improve relevance substantially, especially for ambiguous or conversational queries.
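The four steps above can be sketched end to end in plain Python. This is a toy illustration, not a production design: the `CONCEPTS` lexicon is a hypothetical stand-in for a trained embedding model, the in-memory list stands in for a vector database, and the sample documents are invented.

```python
import math
import re

# Hypothetical stand-in for a trained embedding model. Each known word maps to
# one of four hand-picked concept axes: (price, footwear, running, furniture).
CONCEPTS = {
    "affordable": (1, 0, 0, 0), "budget": (1, 0, 0, 0), "cheap": (1, 0, 0, 0),
    "shoes": (0, 1, 0, 0), "sneakers": (0, 1, 0, 0),
    "running": (0, 0, 1, 0), "jogging": (0, 0, 1, 0),
    "chair": (0, 0, 0, 1), "desk": (0, 0, 0, 1),
}
DIMENSIONS = 4


def tokenize(text):
    """Step 1: lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def embed(text):
    """Step 2: sum the concept vectors of known tokens, then scale to unit length."""
    vector = [0.0] * DIMENSIONS
    for token in tokenize(text):
        for i, value in enumerate(CONCEPTS.get(token, ())):
            vector[i] += value
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector] if norm else vector


def build_index(documents):
    """Step 3: keep each document next to its vector (a list, not a real vector database)."""
    return [(doc, embed(doc)) for doc in documents]


def search(index, query, top_k=2):
    """Step 4: rank by cosine similarity (a plain dot product, since vectors are unit length)."""
    query_vector = embed(query)
    scored = [
        (doc, sum(q * d for q, d in zip(query_vector, doc_vector)))
        for doc, doc_vector in index
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


index = build_index([
    "luxury leather office chair",
    "budget-friendly sneakers for jogging",
    "cheap desk chair",
])
for doc, score in search(index, "affordable running shoes"):
    print(f"{score:.2f}  {doc}")
```

The top result is the sneakers document even though it shares no word with the query. In this toy setup the match comes entirely from the hand-written lexicon; a trained model learns such relationships from data instead.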

Why Traditional Search Falls Short

Keyword-based search systems struggle with:

- Synonyms and semantic variations

- Misspellings and typos

- Long-tail conversational queries

- Context-dependent language

For example, an employee searching an internal knowledge base for “remote work reimbursement” might miss a document titled “home office expense policy.” Neural search solves this gap by understanding the contextual link between remote work and home office policies.
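The gap in that example is easy to reproduce. The sketch below uses a deliberately naive word-overlap score as a stand-in for keyword matching (real keyword engines add stemming and ranking functions, but still depend on shared terms). The second document title is hypothetical, included only for contrast.

```python
import re


def keyword_overlap(query, title):
    """Count the distinct lowercase words that appear in both strings."""
    query_terms = set(re.findall(r"[a-z]+", query.lower()))
    title_terms = set(re.findall(r"[a-z]+", title.lower()))
    return len(query_terms & title_terms)


query = "remote work reimbursement"
print(keyword_overlap(query, "home office expense policy"))    # 0: no shared words
print(keyword_overlap(query, "remote work equipment policy"))  # 2: "remote", "work"
```

With zero overlapping terms, the relevant policy document never enters the candidate set for a purely lexical engine, no matter how the results are ranked afterwards.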

As users become accustomed to conversational AI such as digital assistants and large language models, expectations for smart, contextual responses continue to rise. Companies that fail to modernize search risk frustrating their users and losing engagement.

Key Benefits of Neural Search Engines

Organizations implementing neural search can unlock multiple advantages:

1. Improved Relevance

By interpreting intent instead of relying on literal word matches, neural systems can return relevant results even when the query and the content use different wording.

2. Better User Experience

Users spend less time refining queries and more time engaging with meaningful content.

3. Personalization

Neural models can incorporate user behavior and preferences to tailor results dynamically.

4. Multilingual Support

Advanced models understand cross-lingual relationships, allowing search across languages.

5. Scalability

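Vector databases are designed to handle massive datasets efficiently. They typically rely on approximate nearest-neighbor (ANN) indexes, which find close matches without comparing the query against every stored vector.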

Common Use Cases Across Industries

E-commerce: Online retailers use neural search to interpret shopper intent, leading to improved product discovery and higher conversion rates. For instance, a query like “outfit for summer wedding guest” produces curated suggestions rather than keyword-matched listings.

Enterprise Knowledge Management: Large organizations deploy neural search internally to surface policies, documents, and expertise faster.

Media and Publishing: News and content platforms use semantic search to recommend related articles based on topic similarity.

Healthcare: Medical institutions leverage neural retrieval systems to identify related studies, case notes, and treatment guidelines.

Customer Support: AI-powered help centers guide users to relevant FAQs and documentation with high precision.

Leading Neural Search Tools Compared

Several platforms offer neural search capabilities. Below is a simplified comparison of popular options:

| Tool | Core Strength | Deployment Type | Best For |
|---|---|---|---|
| Elasticsearch with Vector Search | Hybrid keyword and semantic search | Cloud and self-hosted | Enterprises modernizing existing search |
| Algolia NeuralSearch | Fast personalized retail search | Cloud-based | E-commerce experiences |
| Pinecone | High-performance vector database | Managed cloud | AI-driven applications |
| Weaviate | Open-source vector search | Cloud and self-hosted | Developers building AI systems |
| Azure AI Search | Enterprise-ready AI integration | Cloud-based | Microsoft ecosystem users |

Each solution offers different advantages depending on infrastructure, scalability needs, and technical expertise. Some prioritize hybrid search capabilities that blend keyword precision with vector intelligence, while others focus purely on high-speed similarity matching.

Hybrid Search: The Best of Both Worlds

Many organizations adopt a hybrid search approach, combining traditional keyword indexing with neural retrieval. This method balances precision and contextual understanding. For example:

- Keyword filters ensure exact matches for product codes or legal terms.

- Vector search handles natural language queries and semantic similarity.

This blended technique often produces superior results compared to relying on either method alone.
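One common way to blend the two result lists is reciprocal rank fusion (RRF), which combines rankings without needing the keyword and vector scores to be on the same scale. The article does not prescribe a fusion method, so treat this as one option among several. The document IDs are invented, and `k=60` is the constant conventionally used with RRF.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of document IDs; each appearance adds 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical result lists, best match first.
keyword_hits = ["sku-123", "sku-456", "sku-789"]
vector_hits = ["sku-456", "sku-999", "sku-123"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)  # ['sku-456', 'sku-123', 'sku-999', 'sku-789']
```

Documents that both retrievers rank highly rise to the top, while items found by only one retriever still appear further down the list.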

Challenges and Considerations

Despite its advantages, neural search implementation requires thoughtful planning.

Data Quality: AI systems perform best with clean, well-structured data.

Model Selection: The choice of embedding model directly affects result relevance, so candidates should be evaluated on representative queries and content.

Infrastructure Costs: Vector databases and compute resources may increase operational expenses.

Latency Requirements: Real-time applications require optimized indexing and retrieval.

Organizations must evaluate whether to build custom pipelines or leverage managed services. Integration with existing content management systems and analytics platforms is also critical.

The Role of AI Models in Neural Search

Modern neural search engines rely on transformer-based language models to generate embeddings. These models analyze sentence structure, contextual dependencies, and semantic relationships.

Advancements in large language models have further enhanced neural retrieval. Some systems now incorporate retrieval-augmented generation (RAG), where a language model generates responses grounded in retrieved documents. This approach powers intelligent assistants and conversational search interfaces.
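The retrieve-then-generate flow can be sketched in a few lines. The function names, the prompt wording, and the sample passage below are all assumptions made for illustration; `retrieve` and `generate` are injected callables standing in for a real search backend and a real language model.

```python
def build_grounded_prompt(question, passages):
    """Assemble a prompt that asks the model to answer only from the passages."""
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(passages, start=1))
    return (
        "Answer the question using only the sources below. "
        "Cite sources by number, and say so if they do not contain the answer.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )


def answer(question, retrieve, generate, top_k=3):
    """retrieve(question, top_k) -> list of passages; generate(prompt) -> text."""
    passages = retrieve(question, top_k)
    return generate(build_grounded_prompt(question, passages))


# Stubs so the sketch runs on its own; neither is a real service.
def fake_retrieve(question, top_k):
    corpus = ["Home office expense policy: equipment purchases need manager approval."]
    return corpus[:top_k]


def fake_generate(prompt):
    return f"(model output would go here; prompt was {len(prompt)} characters)"


print(build_grounded_prompt("Can I expense a monitor?", fake_retrieve("monitor", 3)))
print(answer("Can I expense a monitor?", fake_retrieve, fake_generate))
```

Keeping retrieval and generation behind simple callables makes it possible to swap either component without touching the prompt-building logic.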

As models evolve, search engines continue improving in areas such as:

- Context retention

- Question answering accuracy

- Intent prediction

- Zero-shot learning across domains

Future Trends in Neural Search

The future of neural search points toward increasingly personalized and conversational experiences. Instead of static result lists, users will interact with AI-driven assistants capable of contextual memory and adaptive learning.

Emerging trends include:

- Voice-integrated search systems

- Real-time personalization based on behavioral signals

- Edge deployment for faster on-device processing

- Cross-modal search combining text, image, and audio inputs

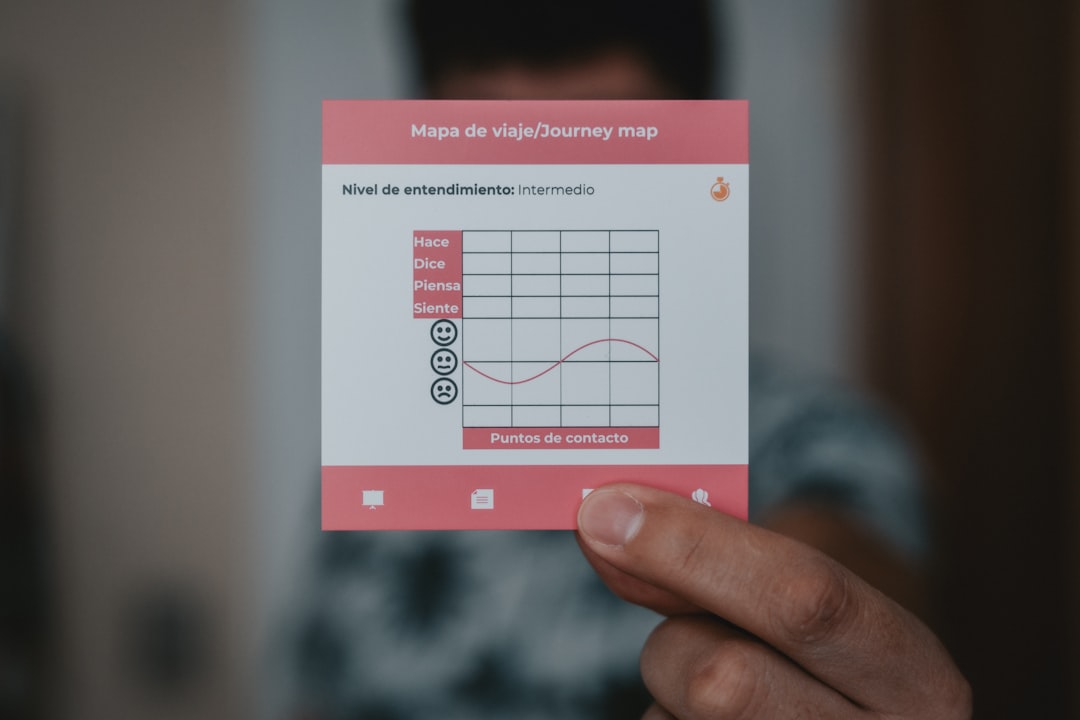

As search systems become more intelligent, businesses will shift focus from simply indexing data to designing meaningful discovery journeys.

Implementing Neural Search Strategically

For organizations considering adoption, a phased approach is often advisable:

- Audit existing search performance and identify gaps.

- Define measurable relevance metrics.

- Experiment with pilot projects using vector databases.

- Integrate hybrid search capabilities.

- Continuously evaluate user behavior and feedback.
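For the "measurable relevance metrics" step, two widely used options are recall@k and mean reciprocal rank (MRR). The sketch below assumes a small set of test queries with hand-labeled relevant documents; the document IDs and judgments are invented for illustration.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant_ids:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def reciprocal_rank(ranked_ids, relevant_ids):
    """1 / rank of the first relevant result, or 0.0 if none was returned."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs):
    """Average reciprocal rank over (ranked_ids, relevant_ids) pairs."""
    return sum(reciprocal_rank(ranked, relevant) for ranked, relevant in runs) / len(runs)


# Hypothetical evaluation set: engine output paired with labeled relevant IDs.
runs = [
    (["d3", "d1", "d7"], {"d1", "d9"}),  # first relevant hit at rank 2
    (["d2", "d5"], {"d2"}),              # first relevant hit at rank 1
]

print(recall_at_k(runs[0][0], runs[0][1], k=3))  # 0.5
print(mean_reciprocal_rank(runs))                # 0.75
```

Tracking the same metrics before and after a pilot gives the audit and evaluation steps a shared baseline for comparison.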

Successful implementation requires collaboration between data engineers, AI specialists, product teams, and user experience designers. When executed thoughtfully, neural search becomes a foundational capability rather than a standalone feature.

Conclusion

Neural search engines represent a fundamental evolution in how people discover information. By understanding semantic meaning instead of relying solely on keywords, they deliver smarter, faster, and more personalized results. Across industries, organizations adopting neural search are improving user satisfaction, operational efficiency, and competitive differentiation.

As artificial intelligence technologies continue advancing, neural search will no longer be considered an innovation but an expectation. Businesses that invest early in intelligent retrieval systems position themselves at the forefront of digital experience transformation.

Frequently Asked Questions (FAQ)

1. What is the difference between neural search and traditional search?

Traditional search matches exact keywords, while neural search understands semantic meaning using vector embeddings and AI models.

2. Do neural search engines replace keyword search completely?

Not necessarily. Many organizations use hybrid systems that combine keyword precision with semantic intelligence.

3. Are neural search engines expensive to implement?

Costs depend on infrastructure, scale, and whether managed services are used. However, improved efficiency and engagement often justify the investment.

4. Can neural search handle multiple languages?

Yes. Many modern embedding models support multilingual understanding and cross-lingual search.

5. Is neural search only for large enterprises?

No. With cloud-based vector databases and pre-trained models available, small and mid-sized businesses can also implement neural search solutions.